A Lesson from the Navy

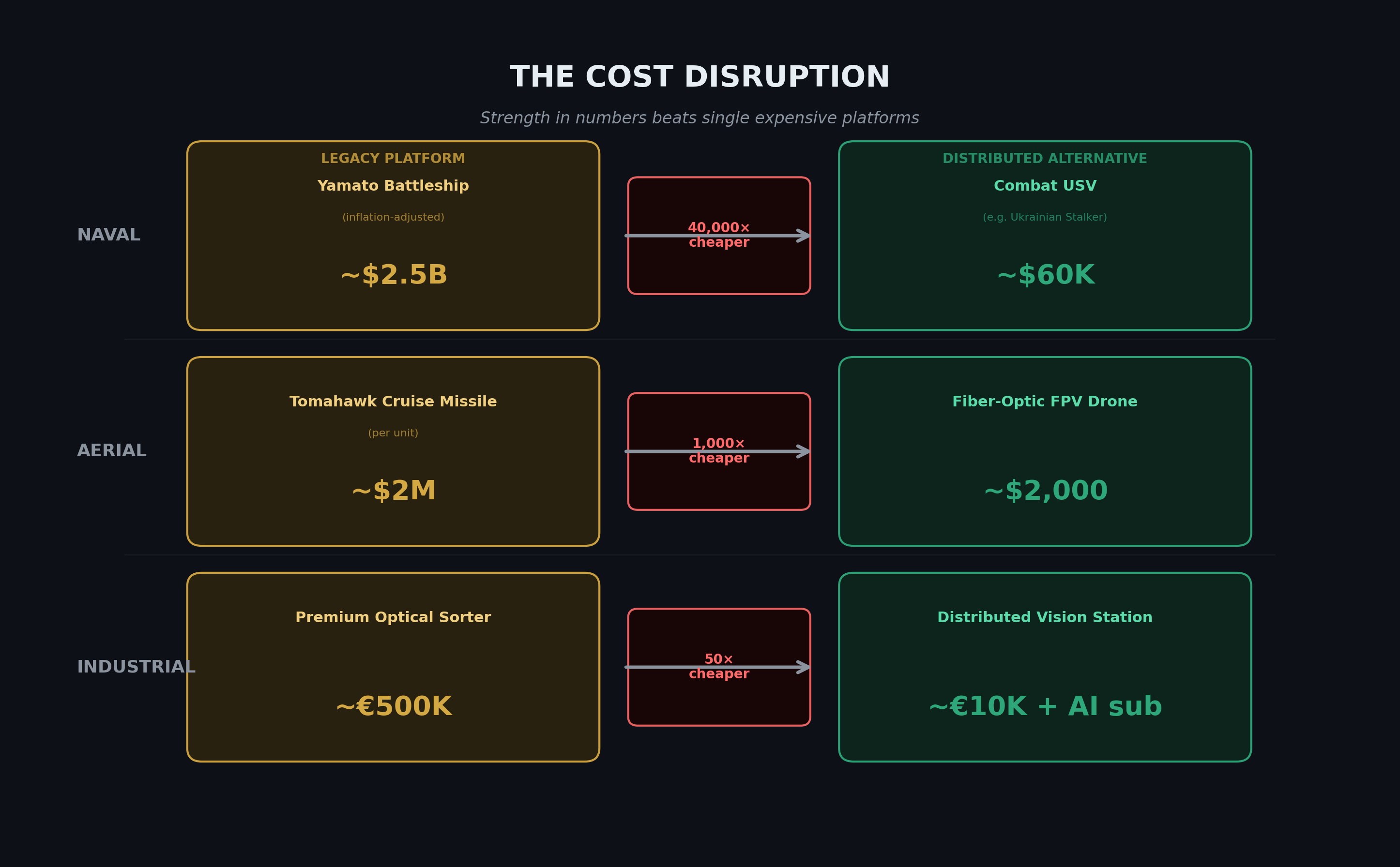

In April 1945, the Imperial Japanese Navy sent the Yamato, the largest and most heavily armoured battleship ever built, on a suicide mission to Okinawa. It was sunk by 386 aircraft before it got within firing range. The most expensive naval platform in the Pacific was neutralised by hundreds of cheap, expendable platforms operating as a system.

This wasn't an accident. It was an inflection point. After World War II, every navy on earth shifted its doctrine. The capital ship — the single, expensive, heavily armed platform — gave way to carrier battle groups, destroyer flotillas, and eventually to what we're seeing today: autonomous naval drones costing a fraction of a frigate, deployed in numbers that make individual losses irrelevant.

The same pattern repeats across domains: from singular, expensive platforms to distributed, expendable ones.

The pattern isn't unique to navies. Ukraine has demonstrated it in the air with devastating clarity. A $1.5M cruise missile can be neutralised by electronic warfare or a point-defence system. But 500 fiber-optic FPV drones at $2,000 each, about $1M in total? The defender can't stop all of them, and the attacker doesn't need every one to get through. Only one needs to hit.

The dominant platform always gets smaller, cheaper, and more numerous. It happened at sea. It happened in the air. It's happening on the factory floor.

The Industrial Parallel Nobody's Talking About

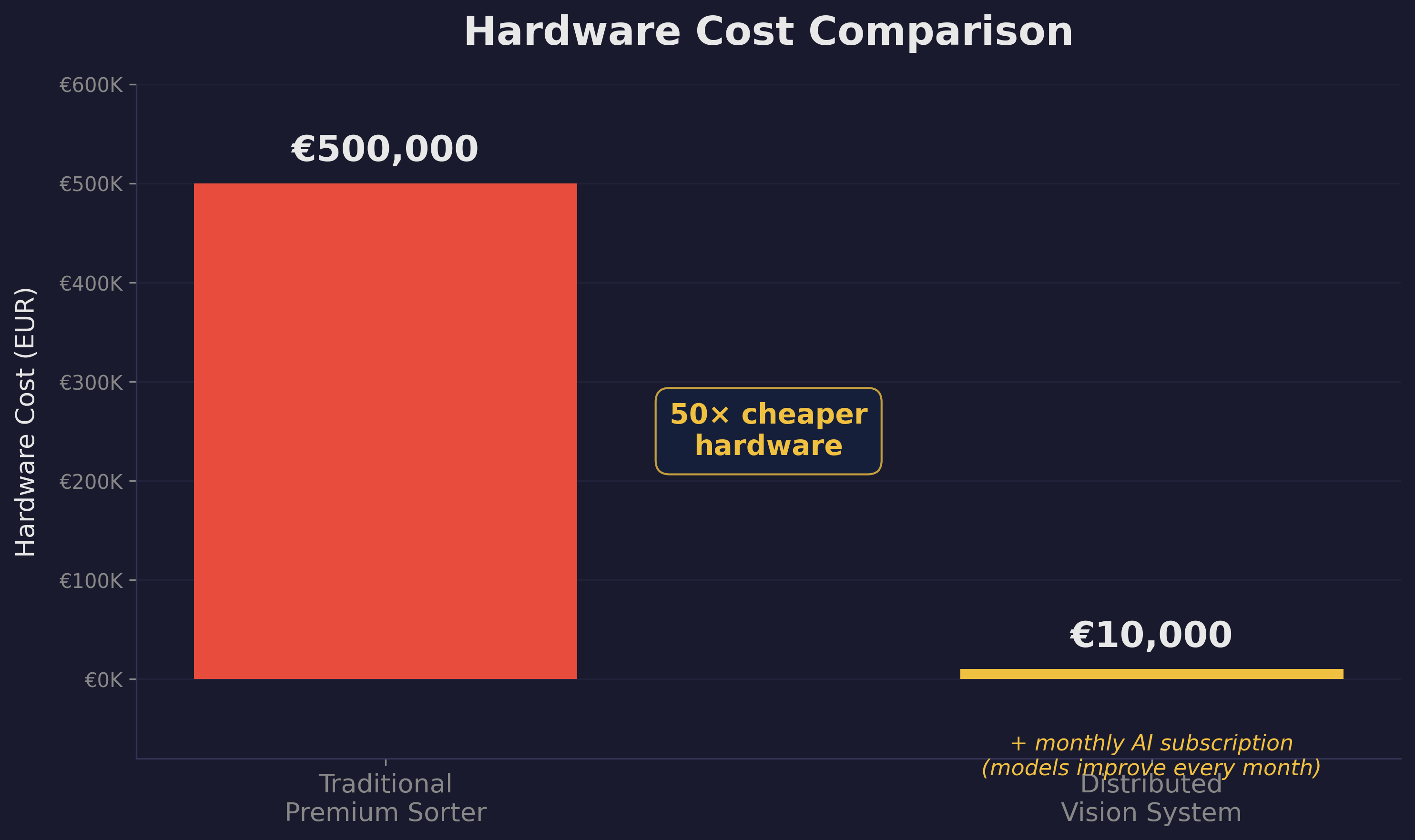

I work in optical food sorting — the business of using cameras, lasers, and algorithms to remove defects from food streams at high speed. It's a mature industry, and the current paradigm looks a lot like a battleship: one large, expensive sorting machine per production line. A premium optical sorter runs around €500,000. It's a precision instrument with proprietary optics, custom illumination, and a closed software ecosystem.

These machines achieve 99%+ detection accuracy. Which sounds nearly perfect — until you calculate what even 1% of defects getting through means at industrial throughput. And if that single machine goes down — for maintenance, for a software fault, for a sensor failure — the line stops. No redundancy. Single point of failure.
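To put a rough number on that, here is a minimal Python sketch of the calculation. The throughput and incoming defect share are purely illustrative assumptions, not figures from any real line; only the 99% detection rate comes from the paragraph above, and the function name is hypothetical.

```python
def escaped_defects_per_hour(items_per_hour: float,
                             defect_fraction: float,
                             detection_rate: float) -> float:
    """Expected number of defective items that pass the sorter each hour."""
    defects_in = items_per_hour * defect_fraction
    return defects_in * (1.0 - detection_rate)


# Hypothetical line (assumed values): 1,000,000 items per hour, 2% defective.
escaped = escaped_defects_per_hour(1_000_000, 0.02, 0.99)
print(f"Defects escaping per hour: {escaped:.0f}")
```

Under those assumed inputs, roughly 200 defective items still get past the sorter every hour, which is why "99%" is less comfortable than it sounds.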

A €500K premium sorter vs. a ~€10K distributed camera system with monthly AI subscription. The economics speak for themselves.

Now consider the alternative. A distributed vision system — multiple industrial cameras spread across a conveyor belt — costs roughly €10,000 in hardware. The intelligence layer runs on a subscription model: AI algorithms updated monthly, continuously improving, adapting to your specific production line. No six-figure capital expenditure. No locked-in proprietary ecosystem.

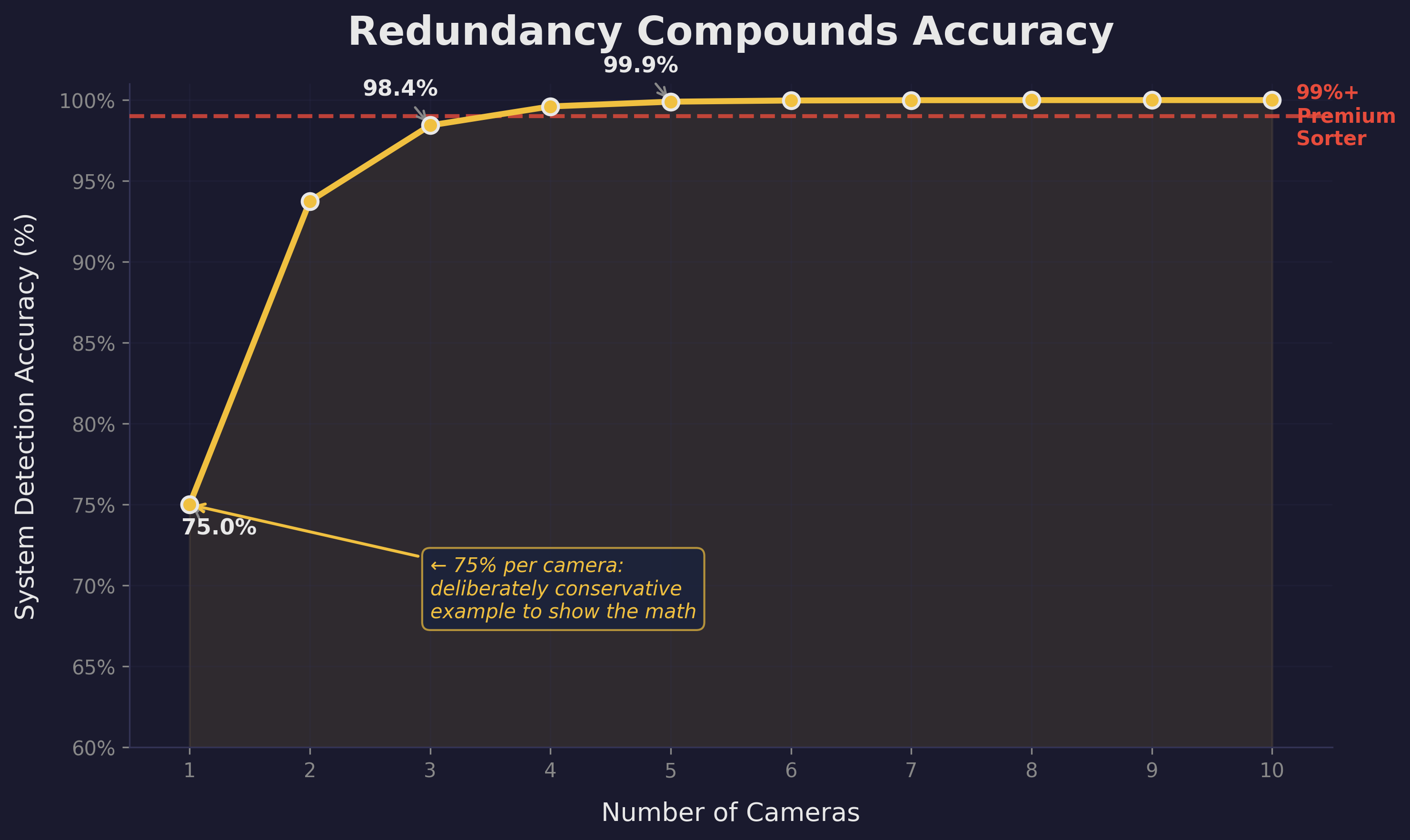

But can cheap cameras match a premium sorter's accuracy? Let's take a deliberately conservative example to illustrate the maths.

The Maths of Redundancy

Assume a single cheap camera has just 75% detection accuracy. That's intentionally low — a worst-case scenario to make the point. The probability of it missing a defect is 25%. Two independent cameras? The probability of both missing it drops to 6.25%. Three cameras: 1.6%. By the time you have five cameras looking at the same product stream, the probability of all five missing a defect is 0.1%.
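The arithmetic above can be reproduced in a few lines of Python. This is a sketch under the same assumption the paragraph makes, namely that the cameras miss defects independently of one another; the function names are hypothetical.

```python
def miss_probability(per_camera_accuracy: float, n_cameras: int) -> float:
    """Probability that all n cameras miss the same defect (independence assumed)."""
    if not 0.0 <= per_camera_accuracy <= 1.0:
        raise ValueError("per_camera_accuracy must be between 0 and 1")
    if n_cameras < 1:
        raise ValueError("n_cameras must be at least 1")
    return (1.0 - per_camera_accuracy) ** n_cameras


def cameras_needed(per_camera_accuracy: float, target_detection_rate: float) -> int:
    """Smallest number of independent cameras that reaches the target rate."""
    if not 0.0 < per_camera_accuracy <= 1.0:
        raise ValueError("per_camera_accuracy must be in (0, 1]")
    if not 0.0 <= target_detection_rate < 1.0:
        raise ValueError("target_detection_rate must be in [0, 1)")
    n = 1
    while 1.0 - miss_probability(per_camera_accuracy, n) < target_detection_rate:
        n += 1
    return n


for n in range(1, 6):
    miss = miss_probability(0.75, n)
    print(f"{n} camera(s): miss {miss:.4%}, detect {1.0 - miss:.4%}")
```

The independence assumption carries the whole result. If the cameras share a failure cause, such as the same lighting or a defect hidden on the underside of the product, their misses are correlated and extra cameras buy less than this formula suggests.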

Five cheap cameras with mediocre individual performance deliver roughly 99.9% system detection accuracy, provided their misses are independent of one another. That surpasses even the premium sorter's 99%+ baseline. At a fraction of the cost. And the AI subscription means those algorithms keep improving month after month, so the system gets better over time, not obsolete.

Even starting from a conservative 75% per camera, system accuracy compounds rapidly. By camera 5, you've surpassed the premium sorter baseline.

This is the same maths that makes drone swarms work. The same maths that naval strategists understood when they moved from battleships to carriers to distributed fleets. Individual unit performance matters less than system performance. And system performance scales with numbers, not with unit cost.

Detection doesn't have to be perfect anymore. Many imperfect sensors plus good algorithms beat one almost perfect sensor every time.

The AI Multiplier

This is where cheap compute and modern AI close the loop. A premium sorter uses a proprietary algorithm finely tuned to its specific optics. It has to — the algorithm is compensating for the limitations of exactly one sensor viewing exactly one angle.

A distributed camera system does something fundamentally different. Multiple cameras generate multiple slightly different perspectives. A lightweight neural network running on edge compute can cross-reference detections across cameras, compensate for individual weaknesses, and learn continuously from the aggregate data stream. The algorithm doesn't need each camera to be perfect. It needs the system to be redundant.
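One simple way to picture cross-referencing is a noisy-OR rule over per-camera defect scores. The sketch below is a deliberately basic stand-in, not the neural network described above. It assumes each camera emits a score between 0 and 1 for the same item, and the function names and the 0.9 threshold are hypothetical.

```python
from math import prod


def fuse_noisy_or(camera_scores: list[float]) -> float:
    """Combine per-camera defect scores into one system-level score.

    Noisy-OR: the item counts as clean only if every camera,
    taken independently, considers it clean.
    """
    return 1.0 - prod(1.0 - score for score in camera_scores)


def should_reject(camera_scores: list[float], threshold: float = 0.9) -> bool:
    """Reject the item when the fused score reaches the (assumed) threshold."""
    return fuse_noisy_or(camera_scores) >= threshold


# Three cameras, each only moderately suspicious of the same item.
scores = [0.6, 0.6, 0.6]
print(f"fused score: {fuse_noisy_or(scores):.3f}, reject: {should_reject(scores)}")
```

No single camera in this example is confident enough to reject the item on its own, but three moderate votes together clear the threshold. A learned model can go further by weighting cameras according to how reliable each has proven to be.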

And here's the key advantage of the subscription model: those AI models improve every month. New defect types, better accuracy, optimised for your specific product stream — all delivered as software updates, not hardware replacements. The premium sorter's algorithm improves only when the manufacturer pushes an update on their schedule, if at all.

More Than Sorting

The real advantage of distributed cameras isn't just better defect detection at lower cost. It's what the intelligence layer does once you stop thinking about sorting as a single-machine job.

Spread cameras across a production line and you close most of the blind spots a single inspection point leaves open. The same hardware doing defect detection can also watch for foreign objects, track contamination events, and estimate yield, not because you added those features deliberately, but because the data is already there. You're not buying a sorter anymore. You're buying plant-wide visibility that happens to sort.

That visibility changes what's detectable. When one camera starts seeing more defects than its neighbours, something upstream has changed — a batch variance, a blade wearing out, a process parameter drifting. A distributed system catches that signal before a quality inspector would. It flags deviations in real time, not at end-of-shift audit.
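A minimal sketch of that neighbour comparison, in Python, might look like the following. It assumes each camera reports a defect rate over the same time window. The camera names, the rates, and the ratio threshold of 2.0 are hypothetical, and a production system would likely use a more robust statistical test.

```python
from statistics import median


def flag_outlier_cameras(defect_rates: dict[str, float],
                         ratio_threshold: float = 2.0) -> list[str]:
    """Return cameras whose defect rate is far above the median of the others."""
    flagged = []
    for camera, rate in defect_rates.items():
        others = [r for name, r in defect_rates.items() if name != camera]
        if not others:
            continue  # a lone camera has nothing to be compared against
        baseline = median(others)
        if baseline > 0 and rate / baseline >= ratio_threshold:
            flagged.append(camera)
    return flagged


# Hypothetical defect rates for one time window.
rates = {"cam_1": 0.020, "cam_2": 0.022, "cam_3": 0.051, "cam_4": 0.019}
print(flag_outlier_cameras(rates))
```

Here only cam_3 is flagged, because its rate is more than double the median of its neighbours. Comparing against the median rather than the mean keeps one drifting camera from distorting the baseline used to judge the rest.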

And when a single camera fails — because eventually one will — the others keep running. There's no emergency service call, no line stoppage, no waiting for a field engineer. Swap the unit at the next planned stop. This is graceful degradation: the system degrades slowly and visibly rather than catastrophically and all at once. The premium sorter offers no equivalent. When it goes down, the line stops.

Finally, every sensor feeds data back into the model. Every defect caught — and every miss flagged by a downstream camera — sharpens the algorithm. The system gets better every month, not by hardware replacement but by the subscription updates rolling in automatically. The premium sorter's algorithm improves on the manufacturer's schedule, if at all. The distributed system improves on yours.

It's Already Happening — Most People Just Haven't Noticed

If the maths is this clear, why aren't factories already full of distributed vision systems? Some are. The shift is underway, but it's quiet, and the people who should be paying attention are often the last to see it.

The suppliers are still building battleships. The established sorting machine manufacturers have spent decades optimising large, expensive, monolithic platforms. Their entire business model (high-margin capital equipment, proprietary service contracts, closed software ecosystems) depends on customers continuing to buy the battleship. They have no incentive to cannibalise their own product lines, even as the economics shift beneath them. Customers would buy smaller, better, cheaper systems today if someone offered them. The incumbents won't, because it would destroy their margins.

Integration is getting easier. A distributed system used to require significant integration work — cameras, edge compute, networking, software. That barrier is shrinking fast. Edge AI platforms are maturing, system integrators are entering the space, and the subscription model means customers don't need in-house AI expertise. The plug-and-play gap is closing.

Regulation is catching up. Food safety requires validated detection performance. A single certified machine was historically easier to validate than a distributed system. But the validation frameworks are evolving — and the data from distributed systems actually makes validation easier. More data points, more transparency, more auditability. The regulatory argument is becoming an advantage, not a barrier.

The battleship vendors will tell you their platform is indispensable. They always do. They're protecting their business model, not solving your problem.

The Pattern

Singular to plural. Expensive to cheap. Centralised to distributed. Fragile to resilient. It happened in naval warfare. It's happening in aerial combat. It's arriving on the factory floor — and the incumbents building battleships are the last ones who'll admit it.

The Yamato wasn't sunk because it was poorly built. It was sunk because the logic of the battlefield had already moved on. Three hundred and eighty-six aircraft didn't care how thick the armour was. The platform was irrelevant. The doctrine was obsolete. The admirals who ordered it into battle knew, on some level, that it was already over — they called it a Special Sea Attack Force because they had no better name for a suicide mission dressed up as strategy.

The battleship vendors on your factory floor are in the same position. Their platforms aren't indispensable. Their margins are a doctrine past its expiry date. The companies that recognise this early will build production systems that are cheaper, more resilient, and more intelligent than anything a single premium machine can deliver. The ones that don't will keep commissioning Yamatos — and wondering, at the end, why the numbers never added up.